It's still common to hear designers or product managers say they'd like to run usability tests on their projects but "it just takes too much time" or they "don't have the budget for it". If you've been reading about this topic, then you already know you don't need massive resources to run tests on your products and, even more importantly, that the outcome heavily outweighs the investment. Steve Krug's book "Rocket Surgery Made Easy" kinda plays with this mistaken stereotype and offers a practical, step-by-step guide to running a successful Usability Test.

At Subvisual, we've followed Steve's guidelines for a couple of years now, and we'll share some of the tests we run, hoping that seeing practical, real use cases will make you more inclined to start testing your own projects. In this post, we'll dive into a Usability Test for a product we built with our partners, called Crediflux.

What is Crediflux

Crediflux is a tool that helps risk managers make their decisions in credit applications.

What tools and software do I need for this?

We've recently bought a professional microphone, not only for Usability Tests, but also for meetings and other potential use cases we might have for it in the future. If this is your first time doing this, you don't need anything but your computer and software to record your screen and audio. We used Open Broadcaster, but you can use anything else that does the job. If you want to have an Observer's Room (don't worry, I'll go over this later on), you will need a TV where you can stream the live recording from the Test Room (the name is self-explanatory, right?). That's it.

3 Weeks Before

Before you run a test, you need a clear idea of what you're going to test, how you'll test it, and what kind of user you need. All of these decisions take place 3 weeks before the Test Day.

To test a product for a field as complex as fintech, we needed to target users who met specific requirements in order to get the most valuable results, and we also needed a clear idea of the most important use case of Crediflux.

Define what to test

This is one of the most important decisions you'll have to make, and probably the one that generates the most discussion within the team.

For a first test, you'll usually aim to test the main use case of your product, the one your users will go through on a daily basis. For Crediflux, it was unanimous that we'd need to test the way a Salesman could create, consult and conclude a credit application, but since we have 3 user types, with different levels of authority in the platform, we also wanted to test how the Decider could take charge, give their opinion and revise a credit application.

Create your list of tasks to test

After you've settled on a clear goal for your test, you need to create the list of tasks to test. To make the best use of your testers' energy and attention without draining them, the session shouldn't take more than an hour, so be reasonable when defining the number of tasks.

In our case, we ended up with 6 tasks, although we feel like we overestimated the time it would take for the testers to go through them, and we could probably have added at least 2 more. Here are a few examples of what our tasks looked like:

- Salesman (D0) - Request a credit application
- Salesman (D0) - Consult credit application
- Salesman (D0) - Conclude credit application

Decide what kind of users to test with

In order to screen possible testers, you need to understand the core characteristics of your user group and use them as your mandatory requirements. In our case, our testers needed to have a Financial background, be between 25 and 50 years old, and have a basic knowledge of computer usage. Not mandatory, but highly valuable, are users who have actually worked in the credit area.

Prepare a survey to screen potential users

Once you know what you're looking for, you just need to prepare a quick survey to evaluate the applicants. At Subvisual we are big fans of Typeform, so that's the tool we'd recommend for the survey, but feel free to use anything else that gets the job done. Here's the survey we prepared for Crediflux:

Advertise for participants and mention the complimentary gift

If it is your first time doing this, you'll probably try to reach friends and family to be your testers. As long as they fit your requirements, that's fine, but you'll usually need to advertise for participants that match your user requirements. To get a good list of potential testers, you need to know where your user group can be found and pick the right channel to reach them. You also need to offer them an incentive for giving you their time - we offered a 25€ voucher for an electronics retailer. We were looking for people with a Financial background, preferably ones who had worked in banks, particularly in managing credit applications, so we used Twitter and Facebook to broadcast our survey, and we also reached out to interesting potential testers through intermediaries.

Write a Script

Every test needs a script, which will be read by the Facilitator during the testing session. The Facilitator is the person who'll be running the test, guiding the tester through the tasks. I'll explain this role in depth later in this post. We always use Steve Krug's script template, and we read it word for word every time.

If you can, book 2 separate rooms

Ideally, you'll have two rooms booked for the whole day - the Test Room and the Observer's Room. The first one is where you'll be running the test, with the Facilitator and the Tester, and the second is for everyone else in the team to follow the test live, share notes and discuss their findings. If you can't have both, make sure you at least have a Test Room; everyone else can follow the test on their own computers, with live streaming, and discuss through Slack or any other platform for team communication.

2 Weeks Before

Once you have a first version of your tasks, you'll need to get feedback and review the list, as well as start screening participants and taking care of the logistics involved.

Get feedback on your list of tasks from the project team and stakeholders

It's crucial to have the team fully committed to the test, and that can only be achieved if they're in sync with the goals of the test, and corresponding tasks. Share the list of tasks with the team, invite feedback and suggestions, and make sure everyone is confident in the final result.

Write the Scenarios

For the testers to perform the tasks naturally, they'll need some context and a specific scenario to place themselves in. You don't need anything fancy, just a basic premise so they know their motivation for performing those tasks. Start by explaining what your product aims to do and what the context of the test is. You can give them a fake name, email address and password in case they need credentials, for example. Then, proceed to detail the tasks, but without giving a step-by-step guide of how to proceed - this is supposed to be a test, not a tutorial. Check the wording and avoid using the same expressions the tester will see in the product being tested, so you don't steer them to where you'd expect them to go.

A few examples of our scenarios for Crediflux:

- You are a salesman for a car-selling company and you just received a request for a credit application. The client is Unicer, Lda - they've been your client for over 5 years and kept a clean record. Create a new request for Unicer, Lda for 10.000€, with a 30-day payment deadline.

Start screening participants

Ideally, you'd be handpicking from over 8 potentially good testers, but that doesn't happen often, and you might need to push further and advertise more aggressively to get a sufficient number of testers that fit the bill. In our case, we had to reach out to more intermediaries to get a couple more potential testers, as we didn't have enough applications that matched all our requirements. We ended up getting an application that fit our requirements perfectly only a few days before the Test Day, so don't worry, there's still time and you might get some great testers applying in the days to come.

Send a "save the date" email to all participants

Set up a Calendar event, with a reminder for the previous day, so everyone knows exactly when the Test Day is and can plan ahead and show up. By inviting everyone to the event, we can also check whether everyone has accepted and confirmed their presence.

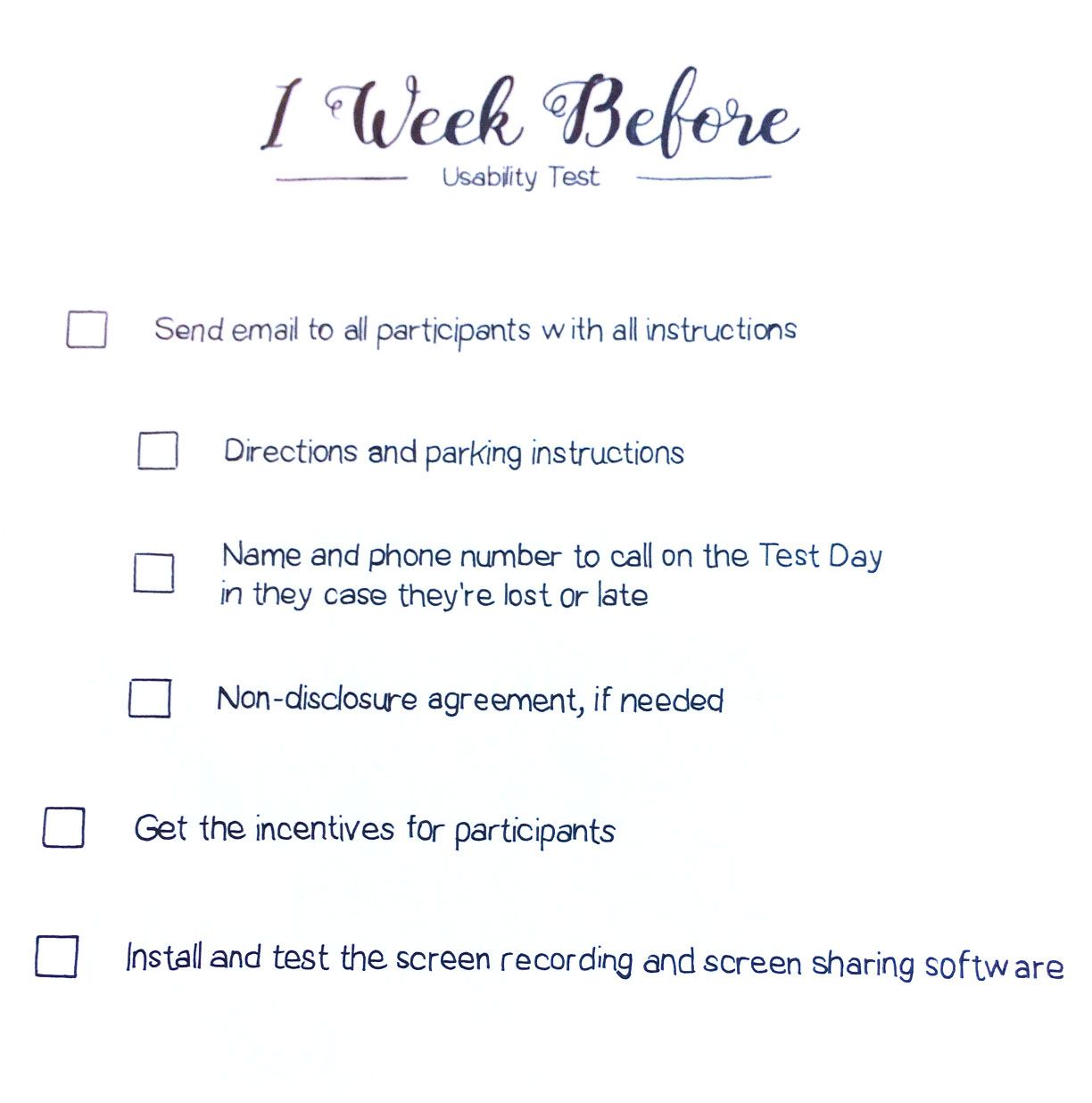

1 Week Before

Time to finalize all the details and make sure everything is ready and will go smoothly, by reviewing and testing everything.

Send email to all participants with all instructions

Send all the information the participants need to get there on time, by following a simple checklist:

- Directions and parking instructions
- Name and phone number to call on the Test Day in case they're lost or late
- Non-disclosure agreement, if needed

Get the incentives for participants

Someone should be responsible for arranging the incentives you've advertised to the participants. If you're offering vouchers, you can delay this until the day before the test, in case you're not sure how many testers you'll actually have by then.

Install and test the screen recording and screen sharing software

The best tip to keep everything running smoothly on Test Day is to test and double-check everything in the days before. That means installing and testing the recording software - how and when do I start recording, how and when do I stop - and also the screen sharing software. We used YouTube's live streaming feature, which has about 4 seconds of latency, but that doesn't really hurt the experience. If you find some other software or platform that's free and performs better, please send us an email or comment at the end of this post - we're always looking for the most efficient tools and techniques.

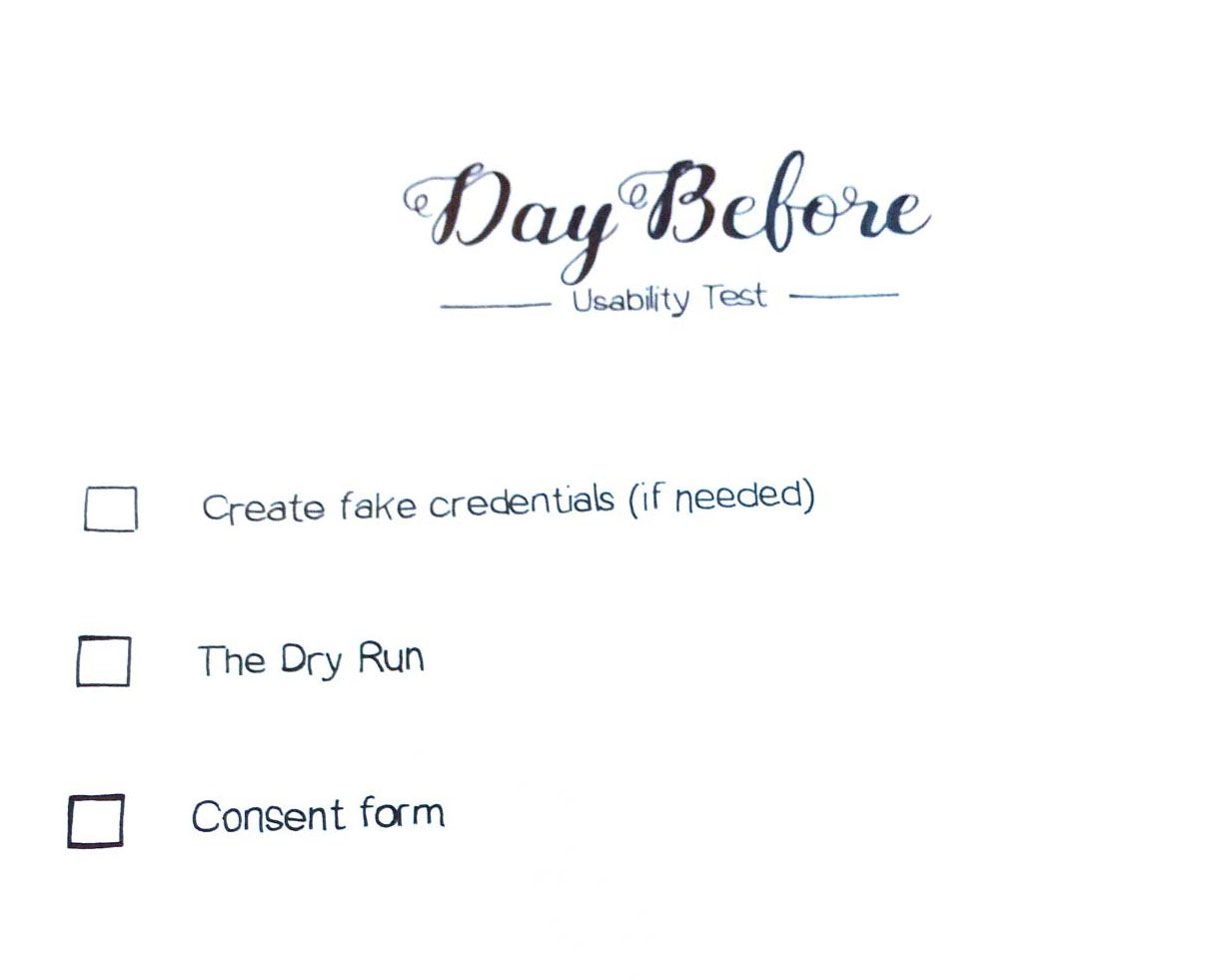

Day Before

Everyone is experiencing a little anxiety, particularly the Facilitator, and probably feeling a bit unsure about what's going to happen. Don't stress, there are only a few things you need to do the day before welcoming your testers to calm everyone down and make sure everything is ready.

Create fake credentials (if needed)

If you need the user to log in for the test, create the fake credentials in advance or allow them to make them up. Unless you're testing the login itself, you usually just want to get that obstacle out of their way.

The Dry Run

Get someone, preferably from outside the team, to do a pilot test with. The Facilitator should read every word of the script, as well as the scenarios. Be on the lookout for bugs that might ruin the use cases you're testing, loopholes in the scenarios and basically anything that might disrupt the test.

Consent form

To record someone's voice, you need to ask for their permission. For legal purposes, we highly recommend that you print a consent form and ask them to sign it before starting to record. As always, Steve Krug has you covered with a simple recording consent form template where you can just fill in the blanks, but he also has this situation perfectly covered in the script.

It's TEST DAY!

Feeling excited yet? On the Test Day, the Facilitator should focus only on welcoming the testers to the Test Room and making them feel comfortable and at ease. It's up to the rest of the team to check everything else on the task list:

- Order lunch for the debriefing
- Make sure the test computer is set and ready, with the product installed or available online
- Test the screen recorder, by doing a short recording and playing it back
- Disable anything that might interrupt the test (notifications for emails, messaging, calendar reminders, etc)
- Make sure the speakers and microphones are working and you're recording the audio perfectly

When the testers start arriving, have someone by the door to greet them with a smile, ask them if they want a coffee or some water and take them to the Test Room. Pay close attention to the testers' reactions, prompt them to think out loud at the beginning of every scenario (and during, if they forget) and take notes on everything - what went well and what didn't.

When all tests are done, gather everyone's notes and insights, debrief on what you felt were the pain points that need your attention, and prioritize them. Focus on the ones that prevent users from completing a task or seriously harm their experience while doing so. Make a list of the bugs you picked up on, but don't make them a priority unless they obviously are. Most of the time, only you and the team working on the project will notice the bugs, unless they are really damaging the experience.

Once you're done debriefing and writing an organized list of problems, you're ready to start thinking about how to iterate and fix them. As soon as the team agrees on a problem, there'll immediately be a lot of ideas to solve it - which is fine, and you should definitely take note of them - but don't stick with the very first idea that pops up. We use Trello for basically everything, and it is particularly useful for this use case, when you need to create a list of problems and suggest potential solutions for them. The team can then discuss and comment on each card, keeping the debate organized and saved for future reference.

After that, it's time to start working on an iteration and planning for the next test. Believe me, after you've conducted a Usability Test once, you'll ask yourself why you never did it before and spend the next few days recommending it to everyone you know.